vendure-data-hub-plugin

Configuration Options

Complete reference for all Data Hub plugin configuration options.

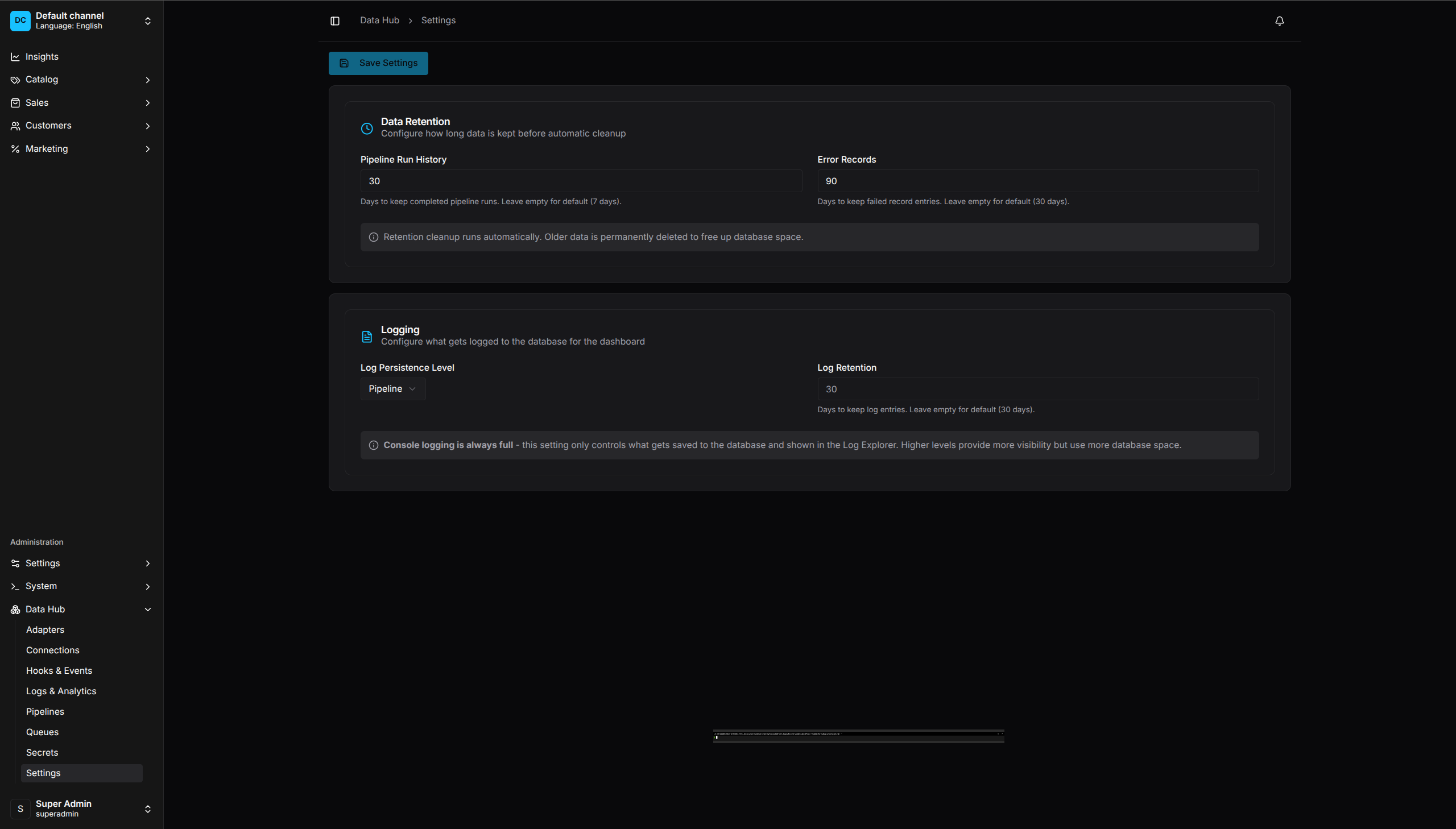

Settings UI - Configure data retention and logging options

Plugin Options

DataHubPlugin.init({

// Core settings

enabled: true,

registerBuiltinAdapters: true,

debug: false,

enableDashboard: true,

// Retention

retentionDaysRuns: 30,

retentionDaysErrors: 90,

// Code-first configuration

pipelines: [],

secrets: [],

connections: [],

adapters: [],

feedGenerators: [],

importTemplates: [],

exportTemplates: [],

scripts: {},

configPath: undefined,

// Runtime configuration

runtime: {

batch: { size: 50, bulkSize: 100 },

http: { timeoutMs: 30000, maxRetries: 3 },

},

// Security configuration

security: {

ssrf: { /* SSRF protection settings */ },

script: { enabled: true },

},

// Notification settings (for gate approval emails)

notifications: {

smtp: { host: 'smtp.example.com', port: 587, /* ... */ },

},

})

Option Reference

enabled

| Type | boolean |

| Default | true |

| Description | Enable or disable the plugin entirely |

DataHubPlugin.init({

enabled: process.env.DATAHUB_ENABLED !== 'false',

})

registerBuiltinAdapters

| Type | boolean |

| Default | true |

| Description | Register built-in extractors, operators, and loaders |

Set to false if you want to register only custom adapters.

debug

| Type | boolean |

| Default | false |

| Description | Enable detailed debug logging |

DataHubPlugin.init({

debug: process.env.NODE_ENV !== 'production',

})

enableDashboard

| Type | boolean |

| Default | true |

| Description | Enable or disable the Data Hub dashboard UI |

DataHubPlugin.init({

enableDashboard: true,

})

retentionDaysRuns

| Type | number |

| Default | 30 |

| Description | Days to keep pipeline run history |

Runs older than this are deleted automatically by the retention job.

retentionDaysErrors

| Type | number |

| Default | 90 |

| Description | Days to keep error records |

Quarantined records older than this are deleted.
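To make the two retention windows concrete, the sketch below computes the cutoff timestamp a retention job could compare records against. The helper name `retentionCutoff` is hypothetical and the plugin's own job may be implemented differently; only the option names and default values come from this page.

```typescript
// Hypothetical helper, not part of the plugin API: returns the instant before
// which a record falls outside a retention window of `days` days.
function retentionCutoff(now: Date, days: number): Date {
  const MS_PER_DAY = 24 * 60 * 60 * 1000;
  return new Date(now.getTime() - days * MS_PER_DAY);
}

const retentionNow = new Date('2024-05-31T00:00:00Z');
const runsCutoff = retentionCutoff(retentionNow, 30);   // retentionDaysRuns default
const errorsCutoff = retentionCutoff(retentionNow, 90); // retentionDaysErrors default

console.log(runsCutoff.toISOString());   // 2024-05-01T00:00:00.000Z
console.log(errorsCutoff.toISOString()); // 2024-03-02T00:00:00.000Z
```

With the defaults, error records outlive the runs that produced them by 60 days.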

pipelines

| Type | CodeFirstPipeline[] |

| Default | [] |

| Description | Code-first pipeline definitions |

interface CodeFirstPipeline {

code: string;

name: string;

description?: string;

enabled?: boolean;

definition: PipelineDefinition;

tags?: string[];

}
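As an illustration, here is the scheduled pipeline from the YAML example later on this page written as a code-first entry. The `...Sketch` types are loose local stand-ins so the snippet compiles on its own; the plugin's real `PipelineDefinition` type is richer than what is assumed here.

```typescript
// Local stand-ins for the plugin's types (assumptions, kept deliberately loose).
type PipelineDefinitionSketch = {
  version: number;
  steps: { key: string; type: string; config: Record<string, unknown> }[];
};

interface CodeFirstPipelineSketch {
  code: string;
  name: string;
  description?: string;
  enabled?: boolean;
  definition: PipelineDefinitionSketch;
  tags?: string[];
}

// Same content as the `product-sync` entry in the YAML example.
const productSyncPipeline: CodeFirstPipelineSketch = {
  code: 'product-sync',
  name: 'Product Sync',
  enabled: true,
  definition: {
    version: 1,
    steps: [
      { key: 'trigger', type: 'TRIGGER', config: { type: 'SCHEDULE', cron: '0 2 * * *' } },
    ],
  },
};
```

An object of this shape would be passed as one element of the `pipelines` array.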

secrets

| Type | CodeFirstSecret[] |

| Default | [] |

| Description | Code-first secret definitions |

interface CodeFirstSecret {

code: string;

provider: 'INLINE' | 'ENV';

value: string;

metadata?: Record<string, any>;

}

connections

| Type | CodeFirstConnection[] |

| Default | [] |

| Description | Code-first connection definitions |

interface CodeFirstConnection {

code: string; // Unique connection identifier

type: string; // Connection type (e.g., 'postgres', 'mysql', 'rest', 's3')

name: string; // Human-readable name

settings: JsonObject; // Connection settings - supports env var references like ${DB_HOST}

}

adapters

| Type | AdapterDefinition[] |

| Default | [] |

| Description | Custom adapter registrations |

interface AdapterDefinition {

readonly type: 'EXTRACTOR' | 'OPERATOR' | 'LOADER' | 'VALIDATOR' | 'ENRICHER' | 'EXPORTER' | 'FEED' | 'SINK' | 'TRIGGER';

readonly code: string;

readonly name?: string;

readonly description?: string;

readonly category?: string;

readonly schema: StepConfigSchema;

readonly pure?: boolean; // For operators: whether side-effect free

readonly async?: boolean;

readonly batchable?: boolean;

readonly requires?: readonly string[];

readonly icon?: string;

readonly version?: string;

readonly deprecated?: boolean;

readonly experimental?: boolean;

readonly entityType?: string; // For loaders: Vendure entity type

readonly formatType?: string; // For exporters/feeds: output format

readonly patchableFields?: readonly string[];

readonly editorType?: string; // For operators: 'map' | 'template' | 'filter'

readonly summaryTemplate?: string; // For operators: e.g. "${from} → ${to}"

}

Example:

DataHubPlugin.init({

adapters: [myCustomExtractor, currencyConvertOperator],

})

See Extending the Plugin for detailed documentation on creating custom adapters.

feedGenerators

| Type | CustomFeedGenerator[] |

| Default | [] |

| Description | Custom feed generator registrations |

DataHubPlugin.init({

feedGenerators: [

myCustomFeedGenerator,

],

})

runtime

| Type | RuntimeLimitsConfig |

| Default | See below |

| Description | Runtime configuration for batch processing, HTTP, circuit breaker, etc. |

interface RuntimeLimitsConfig {

batch?: {

size?: number; // Default batch size (default: 50)

bulkSize?: number; // Bulk operation size (default: 100)

maxInFlight?: number; // Max concurrent operations (default: 5)

rateLimitRps?: number; // Requests per second (default: 10)

};

http?: {

timeoutMs?: number; // Request timeout (default: 30000)

maxRetries?: number; // Max retry attempts (default: 3)

retryDelayMs?: number; // Initial retry delay (default: 1000)

retryMaxDelayMs?: number; // Max retry delay (default: 30000)

exponentialBackoff?: boolean; // Enable exponential backoff (default: true)

backoffMultiplier?: number; // Backoff multiplier (default: 2)

};

circuitBreaker?: {

enabled?: boolean; // Enable circuit breaker (default: true)

failureThreshold?: number; // Failures before opening (default: 5)

successThreshold?: number; // Successes to close (default: 3)

resetTimeoutMs?: number; // Time before reset attempt (default: 30000)

};

connectionPool?: {

min?: number; // Min connections (default: 1)

max?: number; // Max connections (default: 10)

idleTimeoutMs?: number; // Idle timeout (default: 30000)

};

pagination?: {

maxPages?: number; // Max pages to fetch (default: 100)

pageSize?: number; // Default page size (default: 100)

databasePageSize?: number; // Database page size (default: 1000)

};

scheduler?: {

checkIntervalMs?: number; // Cron check interval (default: 30000)

refreshIntervalMs?: number; // Cache refresh interval (default: 60000)

};

}
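The three `circuitBreaker` thresholds interact as a small state machine. The class below is a generic sketch of that pattern, not the plugin's implementation: it assumes failures are counted consecutively and that any failure during a trial period reopens the circuit. `CircuitBreakerSketch` and its method names are hypothetical.

```typescript
type BreakerState = 'CLOSED' | 'OPEN' | 'HALF_OPEN';

class CircuitBreakerSketch {
  private state: BreakerState = 'CLOSED';
  private failures = 0;
  private successes = 0;
  private openedAt = 0;
  private readonly failureThreshold: number;
  private readonly successThreshold: number;
  private readonly resetTimeoutMs: number;

  // Defaults mirror the documented circuitBreaker defaults.
  constructor(failureThreshold = 5, successThreshold = 3, resetTimeoutMs = 30000) {
    this.failureThreshold = failureThreshold;
    this.successThreshold = successThreshold;
    this.resetTimeoutMs = resetTimeoutMs;
  }

  // Returns whether a call may proceed at time `nowMs`.
  canRequest(nowMs: number): boolean {
    if (this.state === 'OPEN' && nowMs - this.openedAt >= this.resetTimeoutMs) {
      this.state = 'HALF_OPEN'; // reset timeout elapsed: allow trial calls
      this.successes = 0;
    }
    return this.state !== 'OPEN';
  }

  recordSuccess(): void {
    if (this.state === 'HALF_OPEN') {
      this.successes += 1;
      if (this.successes >= this.successThreshold) {
        this.state = 'CLOSED';
        this.failures = 0;
      }
    } else {
      this.failures = 0; // assumption: a success resets the failure streak
    }
  }

  recordFailure(nowMs: number): void {
    this.failures += 1;
    if (this.state === 'HALF_OPEN' || this.failures >= this.failureThreshold) {
      this.state = 'OPEN';
      this.openedAt = nowMs;
    }
  }

  getState(): BreakerState {
    return this.state;
  }
}

// Small thresholds so the transitions are easy to follow.
const breaker = new CircuitBreakerSketch(2, 1, 1000);
breaker.recordFailure(0);
breaker.recordFailure(10);                        // second failure opens the circuit
const breakerBlocked = !breaker.canRequest(500);  // still inside the reset timeout
const breakerTrial = breaker.canRequest(1010);    // timeout elapsed: trial call allowed
breaker.recordSuccess();                          // one success closes it again
```

Time is passed in explicitly rather than read from the clock, which keeps the sketch deterministic.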

Example:

DataHubPlugin.init({

runtime: {

batch: { size: 100, maxInFlight: 10 },

http: { timeoutMs: 60000, maxRetries: 5 },

circuitBreaker: { failureThreshold: 10 },

},

})
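For a feel of how the `http` retry options combine, the following sketch derives the wait before each retry from the documented defaults. It assumes plain exponential growth capped at `retryMaxDelayMs` with no jitter; the plugin may compute delays differently, and `retryDelayFor` is a hypothetical helper.

```typescript
interface HttpRetrySketch {
  retryDelayMs: number;
  retryMaxDelayMs: number;
  exponentialBackoff: boolean;
  backoffMultiplier: number;
}

// Values taken from the documented defaults.
const defaultHttpRetry: HttpRetrySketch = {
  retryDelayMs: 1000,
  retryMaxDelayMs: 30000,
  exponentialBackoff: true,
  backoffMultiplier: 2,
};

// `attempt` is 1-based: attempt 1 is the first retry.
function retryDelayFor(attempt: number, cfg: HttpRetrySketch = defaultHttpRetry): number {
  if (!cfg.exponentialBackoff) {
    return cfg.retryDelayMs; // fixed delay between retries
  }
  const delay = cfg.retryDelayMs * Math.pow(cfg.backoffMultiplier, attempt - 1);
  return Math.min(delay, cfg.retryMaxDelayMs);
}

const backoffSchedule = [1, 2, 3, 4, 5, 6].map(attempt => retryDelayFor(attempt));
console.log(backoffSchedule); // [ 1000, 2000, 4000, 8000, 16000, 30000 ]
```

Under these assumptions the cap only takes effect from the sixth retry, so raising `maxRetries` to 5 as in the example above never reaches `retryMaxDelayMs`.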

security

| Type | SecurityConfig |

| Default | See below |

| Description | Security configuration for SSRF protection and script execution |

interface SecurityConfig {

ssrf?: UrlSecurityConfig; // SSRF protection settings

script?: ScriptSecurityConfig; // Script operator security settings

}

interface ScriptSecurityConfig {

enabled?: boolean; // Enable script operators (default: true)

validation?: { // Code validation settings

maxExpressionLength?: number;

// ... other validation options

};

maxCacheSize?: number; // Max cached expressions (default: 1000)

defaultTimeoutMs?: number; // Script timeout (default: 5000)

enableCache?: boolean; // Enable expression caching (default: true)

}

Example:

DataHubPlugin.init({

security: {

script: {

enabled: true,

defaultTimeoutMs: 10000,

},

},

})

notifications

| Type | { smtp?: NotificationSmtpConfig } |

| Default | undefined |

| Description | SMTP settings for gate approval notification emails |

interface NotificationSmtpConfig {

host: string;

port: number;

secure?: boolean; // Defaults to true for port 465

auth?: { user: string; pass: string };

from?: string; // Sender address

}

Example:

DataHubPlugin.init({

notifications: {

smtp: {

host: 'smtp.example.com',

port: 587,

auth: { user: 'notifications@example.com', pass: process.env.SMTP_PASS! },

from: 'DataHub <notifications@example.com>',

},

},

})

When configured, GATE steps with notifyEmail will send approval notification emails via this SMTP server. Without this configuration, email notifications are skipped with a warning.
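The `secure` default noted in the interface can be expressed in one line. This is only a sketch of the documented rule ("defaults to true for port 465"); `effectiveSecure` is a hypothetical helper, and the plugin's mail transport may resolve the flag differently.

```typescript
interface SmtpSketch {
  host: string;
  port: number;
  secure?: boolean;
}

// An explicit `secure` wins; otherwise TLS-on-connect is assumed only for port 465.
function effectiveSecure(cfg: SmtpSketch): boolean {
  return cfg.secure ?? (cfg.port === 465);
}

const submissionPort = effectiveSecure({ host: 'smtp.example.com', port: 587 }); // false
const implicitTls = effectiveSecure({ host: 'smtp.example.com', port: 465 });    // true
```

Port 587, as used in the example above, conventionally starts unencrypted and upgrades with STARTTLS, which is why `secure` is left unset there.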

importTemplates

| Type | CustomImportTemplate[] |

| Default | Built-in templates (REST API Sync, JSON Import, Magento CSV, XML Feed, ERP Inventory, CRM Customer) |

| Description | Custom import templates for the import wizard |

interface CustomImportTemplate {

id: string;

name: string;

description: string;

category: string; // 'products' | 'customers' | 'inventory' | 'catalog'

icon?: string; // lucide-react icon name

requiredFields: string[];

optionalFields?: string[];

featured?: boolean;

tags?: string[];

formats?: string[]; // 'CSV' | 'JSON' | 'XML' | 'API'

definition?: {

sourceType?: string;

fileFormat?: string;

targetEntity?: string;

existingRecords?: string;

lookupFields?: string[];

fieldMappings?: { sourceField: string; targetField: string }[];

};

}
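A custom template might look like the following. The template itself (`supplier-price-list`) and its field names are invented for illustration, and the interface is a trimmed local copy so the snippet stands alone. Fields of `definition` whose accepted values are not listed on this page (`sourceType`, `existingRecords`, and so on) are left out rather than guessed.

```typescript
// Trimmed local copy of CustomImportTemplate, for a self-contained example.
interface ImportTemplateSketch {
  id: string;
  name: string;
  description: string;
  category: string;
  requiredFields: string[];
  optionalFields?: string[];
  tags?: string[];
  formats?: string[];
  definition?: {
    lookupFields?: string[];
    fieldMappings?: { sourceField: string; targetField: string }[];
  };
}

// Hypothetical template: a supplier CSV with SKU and price columns.
const supplierPriceListTemplate: ImportTemplateSketch = {
  id: 'supplier-price-list',
  name: 'Supplier Price List',
  description: 'Updates variant prices from a supplier CSV export',
  category: 'products',
  requiredFields: ['sku', 'price'],
  optionalFields: ['currency'],
  tags: ['pricing'],
  formats: ['CSV'],
  definition: {
    lookupFields: ['sku'],
    fieldMappings: [
      { sourceField: 'Article No', targetField: 'sku' },
      { sourceField: 'Net Price', targetField: 'price' },
    ],
  },
};
```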

Including Connector Templates

Connectors (like Pimcore) ship their own templates. Built-in export templates are served automatically by the TemplateRegistryService. To include connector templates in the wizard, pass them via plugin options:


import { PimcoreConnector } from '@oronts/vendure-data-hub-plugin/connectors/pimcore';

import { DEFAULT_IMPORT_TEMPLATES } from '@oronts/vendure-data-hub-plugin';

DataHubPlugin.init({

importTemplates: [

...DEFAULT_IMPORT_TEMPLATES,

...(PimcoreConnector.importTemplates ?? []),

],

exportTemplates: [

...(PimcoreConnector.exportTemplates ?? []),

],

})

If using ConnectorRegistry with multiple connectors:

const connectorTemplates = registry.getPluginTemplates();

DataHubPlugin.init({

importTemplates: [...DEFAULT_IMPORT_TEMPLATES, ...connectorTemplates.importTemplates],

exportTemplates: connectorTemplates.exportTemplates,

})

exportTemplates

| Type | CustomExportTemplate[] |

| Default | Built-in templates (Product XML Feed, Order Analytics CSV, Customer GDPR Export, Inventory Report) |

| Description | Custom export templates for the export wizard |

interface CustomExportTemplate {

id: string;

name: string;

description: string;

icon?: string;

format: string;

requiredFields?: string[];

tags?: string[];

definition?: {

sourceEntity?: string;

fields?: string[];

formatOptions?: Record<string, unknown>;

};

}

scripts

| Type | Record<string, ScriptFunction> |

| Default | undefined |

| Description | Named script functions for use in pipeline hook actions |

Scripts registered via plugin options are auto-registered on startup and can be referenced by name in SCRIPT hook actions within pipeline definitions.

type ScriptFunction = (

records: readonly JsonObject[],

context: HookContext,

args?: JsonObject,

) => Promise<JsonObject[]> | JsonObject[];

Example:

DataHubPlugin.init({

scripts: {

'validate-sku': async (records, context) => {

return records.filter(r => r.sku && String(r.sku).length > 0);

},

'enrich-pricing': async (records, context) => {

return records.map(r => ({ ...r, priceInCents: Math.round(Number(r.price) * 100) })); // round to avoid floating-point artifacts such as 1998.9999999999998

},

},

})

Use in pipeline definitions:

hooks: {

AFTER_EXTRACT: [{ type: 'SCRIPT', scriptName: 'validate-sku' }],

BEFORE_LOAD: [{ type: 'SCRIPT', scriptName: 'enrich-pricing', args: { currency: 'USD' } }],

}

Scripts have full access to the HookContext (pipelineId, runId, stage) and optional args passed from the hook action. They can filter, transform, enrich, or reject records.
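Because a script is a plain function, it can be exercised in isolation before it is registered. The sketch below reads the optional `args` that a hook action passes along. The `...Sketch` types are local stand-ins for the plugin's `JsonObject` and `HookContext` (the context fields are assumed to be strings), and `tagCurrency` is a hypothetical script.

```typescript
type JsonValueSketch =
  | string | number | boolean | null
  | JsonValueSketch[]
  | { [key: string]: JsonValueSketch };
type JsonObjectSketch = { [key: string]: JsonValueSketch };

interface HookContextSketch {
  pipelineId: string;
  runId: string;
  stage: string;
}

type ScriptFunctionSketch = (
  records: readonly JsonObjectSketch[],
  context: HookContextSketch,
  args?: JsonObjectSketch,
) => Promise<JsonObjectSketch[]> | JsonObjectSketch[];

// Tags every record with the currency passed via args, falling back to 'USD'.
function tagCurrency(
  records: readonly JsonObjectSketch[],
  _context: HookContextSketch,
  args?: JsonObjectSketch,
): JsonObjectSketch[] {
  const requested = args?.currency;
  const currency = typeof requested === 'string' ? requested : 'USD';
  return records.map(r => ({ ...r, currency }));
}

// Type-checks the function against the documented script signature.
const registrableScript: ScriptFunctionSketch = tagCurrency;

const sampleContext: HookContextSketch = { pipelineId: 'p1', runId: 'r1', stage: 'BEFORE_LOAD' };
const taggedEur = tagCurrency([{ sku: 'A-1', price: 19.99 }], sampleContext, { currency: 'EUR' });
const taggedDefault = tagCurrency([{ sku: 'A-2', price: 5 }], sampleContext);
```

Returning new objects instead of mutating the input respects the `readonly` records array in the signature.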

configPath

| Type | string |

| Default | undefined |

| Description | Path to external config file |

Load configuration from a YAML or JSON file:

DataHubPlugin.init({

configPath: './config/data-hub.yaml',

})

Environment Variables

Use environment variables in configurations:

In Secrets

secrets: [

{ code: 'api-key', provider: 'ENV', value: 'MY_API_KEY' },

]
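In the entry above, `value` holds the name of an environment variable rather than the secret itself. The sketch below shows one way the two providers could be resolved; it is an assumption about the behaviour, not the plugin's code, and `resolveSecretValue` is a hypothetical helper that takes the environment as a parameter so it can be tested.

```typescript
interface SecretSketch {
  code: string;
  provider: 'INLINE' | 'ENV';
  value: string;
}

function resolveSecretValue(
  secret: SecretSketch,
  env: Record<string, string | undefined>,
): string {
  if (secret.provider === 'INLINE') {
    return secret.value; // the value is the secret itself
  }
  // ENV: the value names the variable to read.
  const resolved = env[secret.value];
  if (resolved === undefined) {
    // Assumption: failing loudly is preferable to silently using an empty secret.
    throw new Error(`Environment variable ${secret.value} is not set (secret "${secret.code}")`);
  }
  return resolved;
}

const fakeEnv = { MY_API_KEY: 's3cr3t' };
const fromEnv = resolveSecretValue({ code: 'api-key', provider: 'ENV', value: 'MY_API_KEY' }, fakeEnv);
const inlineValue = resolveSecretValue({ code: 'test-api', provider: 'INLINE', value: 'test-key' }, fakeEnv);
```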

In Connections

Use ${VAR} syntax:

connections: [

{

code: 'db',

type: 'postgres',

settings: {

host: '${DB_HOST}',

password: '${DB_PASSWORD}',

},

},

]
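The `${VAR}` substitution can be pictured as a recursive walk over the settings object. The sketch below is illustrative only: it assumes unset variables are left as-is, which may not match what the plugin does, and `resolveEnvRefs` is a hypothetical helper.

```typescript
type SettingsSketch = { [key: string]: string | number | boolean | SettingsSketch };

// Replaces ${NAME} placeholders in every string value, descending into nested objects.
function resolveEnvRefs(
  settings: SettingsSketch,
  env: Record<string, string | undefined>,
): SettingsSketch {
  const out: SettingsSketch = {};
  for (const key of Object.keys(settings)) {
    const value = settings[key];
    if (typeof value === 'string') {
      out[key] = value.replace(
        /\$\{([A-Za-z_][A-Za-z0-9_]*)\}/g,
        (match: string, varName: string) => env[varName] ?? match, // keep placeholder if unset
      );
    } else if (typeof value === 'object') {
      out[key] = resolveEnvRefs(value, env);
    } else {
      out[key] = value; // numbers and booleans pass through unchanged
    }
  }
  return out;
}

const resolvedSettings = resolveEnvRefs(
  { host: '${DB_HOST}', port: 5432, auth: { password: '${DB_PASSWORD}' } },
  { DB_HOST: 'db.internal' },
);
console.log(JSON.stringify(resolvedSettings));
// {"host":"db.internal","port":5432,"auth":{"password":"${DB_PASSWORD}"}}
```

Note the single quotes around `'${DB_HOST}'` in the configuration above: in a template literal (backticks) TypeScript would interpolate the expression itself instead of passing the placeholder through.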

External Config File

YAML Format

# data-hub.yaml

secrets:

- code: supplier-api

provider: ENV

value: SUPPLIER_API_KEY

connections:

- code: erp-db

type: postgres

name: ERP Database

settings:

host: ${ERP_DB_HOST}

port: 5432

database: erp

username: ${ERP_DB_USER}

password: ${ERP_DB_PASSWORD}

pipelines:

- code: product-sync

name: Product Sync

enabled: true

definition:

version: 1

steps:

- key: trigger

type: TRIGGER

config:

type: SCHEDULE

cron: "0 2 * * *"

JSON Format

{

"secrets": [

{ "code": "api-key", "provider": "ENV", "value": "API_KEY" }

],

"connections": [],

"pipelines": []

}
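Before pointing `configPath` at a JSON file, it can help to check its top-level shape. The sketch below does only that and assumes missing sections default to empty arrays; it says nothing about how the plugin itself loads or validates the file, and `parseExternalConfig` is a hypothetical helper.

```typescript
interface ExternalConfigSketch {
  secrets: unknown[];
  connections: unknown[];
  pipelines: unknown[];
}

function parseExternalConfig(jsonText: string): ExternalConfigSketch {
  const raw: unknown = JSON.parse(jsonText);
  if (typeof raw !== 'object' || raw === null || Array.isArray(raw)) {
    throw new Error('Config root must be a JSON object');
  }
  const root = raw as Record<string, unknown>;

  // Reads one top-level section and insists that it is an array.
  const section = (key: string): unknown[] => {
    const value = root[key];
    if (value === undefined) {
      return []; // assumption: an absent section means "none configured"
    }
    if (!Array.isArray(value)) {
      throw new Error(`"${key}" must be an array`);
    }
    return value;
  };

  return {
    secrets: section('secrets'),
    connections: section('connections'),
    pipelines: section('pipelines'),
  };
}

const parsedConfig = parseExternalConfig(
  '{ "secrets": [{ "code": "api-key", "provider": "ENV", "value": "API_KEY" }], "pipelines": [] }',
);
console.log(parsedConfig.secrets.length, parsedConfig.connections.length); // 1 0
```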

Runtime Settings

These settings can be changed via the Admin UI or GraphQL:

| Setting | Description |
|---|---|
| retentionDaysRuns | Run history retention |
| retentionDaysErrors | Error retention |
| retentionDaysLogs | Log retention |
| logPersistenceLevel | Minimum log level to persist |

mutation {

updateDataHubSettings(input: {

retentionDaysRuns: 60

retentionDaysErrors: 90

logPersistenceLevel: "info"

}) {

retentionDaysRuns

retentionDaysErrors

}

}

Job Queue Configuration

Configure Vendure’s job queue for pipeline execution:

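// vendure-config.ts

import { DefaultJobQueuePlugin, VendureConfig } from '@vendure/core';

export const config: VendureConfig = {

jobQueueOptions: {

activeQueues: ['default', 'data-hub.run', 'data-hub.schedule'],

},

plugins: [

// pollInterval is an option of DefaultJobQueuePlugin, not of jobQueueOptions

DefaultJobQueuePlugin.init({ pollInterval: 1000 }),

// ...DataHubPlugin.init({ ... }) and your other plugins

],

};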

Queue Names

| Queue | Purpose |
|---|---|
| data-hub.run | Pipeline execution jobs |
| data-hub.schedule | Schedule checking jobs |

Worker Scaling

For high-volume pipelines, run dedicated workers:

// worker.ts

import { bootstrapWorker } from '@vendure/core';

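import { config } from './vendure-config'; // named export, matching vendure-config.ts above

bootstrapWorker(config)

.then(worker => worker.startJobQueue())

.then(() => console.log('Worker started'))

.catch(err => {

// Exit non-zero so a process manager can restart the worker

console.error(err);

process.exit(1);

});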

Example Configurations

Development

DataHubPlugin.init({

enabled: true,

debug: true,

retentionDaysRuns: 7,

secrets: [

{ code: 'test-api', provider: 'INLINE', value: 'test-key' },

],

})

Production

DataHubPlugin.init({

enabled: true,

debug: false,

retentionDaysRuns: 30,

retentionDaysErrors: 90,

configPath: './config/data-hub.yaml',

})

Multi-Environment

const isProd = process.env.NODE_ENV === 'production';

DataHubPlugin.init({

enabled: true,

debug: !isProd,

retentionDaysRuns: isProd ? 30 : 7,

secrets: [

{ code: 'api-key', provider: 'ENV', value: 'API_KEY' },

],

connections: [

{

code: 'main-db',

type: 'postgres',

name: isProd ? 'Production DB' : 'Dev DB',

settings: {

host: '${DB_HOST}',

database: '${DB_NAME}',

},

},

],

})